Does AI Content Actually Rank? A SaaS Case Study with Real Search Data

The debate around AI-generated content and SEO has produced more noise than signal. Ask five marketers whether AI content works and you’ll get five different answers, usually based on gut instinct, a blog post they half-read, or a single anecdotal win or loss. What the conversation has been missing is actual performance data from a real company operating in a competitive SaaS market.

Over the last 12 months, that’s what Bohu has put together. We tracked organic search performance across two distinct content types on a mid-market SaaS platform: AI-written blog content, and traditionally produced human content from trained SEO writers. The dataset covers over 70 published pieces and millions of search impressions. The findings challenge some widely held assumptions. Let’s get into them.

Does Google Penalize AI-Generated Content?

No, and the official record on this is clear. Google’s position, published directly in their Search Central documentation, draws a hard line between two types of AI content: content created to help users, and content created to game rankings. The former is fine. The latter violates spam policies regardless of whether a human or a machine wrote it.

Google’s official guidance states that ‘using automation, including AI, to generate content with the primary purpose of manipulating ranking in search results violates our spam policies,’ but follows that immediately with the clarification that ‘not all use of automation, including AI generation, is spam.’

That distinction matters. Google’s March 2024 core update reinforced it in practice. By late April 2024, Google reported a 45% reduction in low-quality, unoriginal content appearing in search results, a number that reflects action taken against mass-produced spam, not against quality AI blog posts produced with intent and editorial oversight.

The practical takeaway for SaaS content teams: AI content quality is the variable that determines performance, not AI authorship itself. That reframing matters enormously for how you build your content program.

What the Data Actually Shows

Chart 1 shows the performance split between AI-generated and human-written content across the 12-month study period, with the three headline metrics side by side.

Chart 1: Overall performance metrics — AI content vs. human content (March 2025–March 2026)

The AI content pulled 9,323 clicks across 27 pieces at an average position of 8.4. The 46+ human-produced pieces generated roughly 3,200 clicks at an average position of 22.1. At first glance, the AI content looks like a clear winner. But this is exactly where most analyses go wrong, taking the surface numbers at face value without asking why they differ. Once you control for the most important variable, the story shifts significantly.

The Flawed Comparison Flooding Your LinkedIn Feed

Before digging further into the data, it’s worth naming a problem with how most AI-versus-human content comparisons are conducted. Bad methodology is producing bad conclusions, and those conclusions are shaping real content strategy decisions.

The most common version of this analysis goes something like: ‘We published X AI articles and Y human articles, measured clicks and rankings after 90 days, and here’s what we found.’ The chart gets shared. People form opinions. The problem is that this comparison is fundamentally flawed if no one controlled for what keywords each content type was targeting.

Keyword competition and search intent are the dominant variables in organic search performance, not content authorship. A piece targeting ‘what is event management’ and a piece targeting ‘best event management software’ are not the same type of race. One is a jog on a flat track. The other is a hill sprint against Cvent, G2, and Eventbrite with millions in domain authority behind them. Comparing their click and ranking results as though authorship explains the difference isn’t analysis. It’s noise dressed up as data.

This is exactly the flaw embedded in most AI content studies circulating right now. When AI content ‘outperforms’ human content in these comparisons, it’s almost always because the AI content was pointed at informational queries: definitional, low-competition, high-volume questions where any well-structured page can rank. Human content, meanwhile, is typically doing the harder commercial work. The comparison is not apples to apples. It’s not even the same fruit.

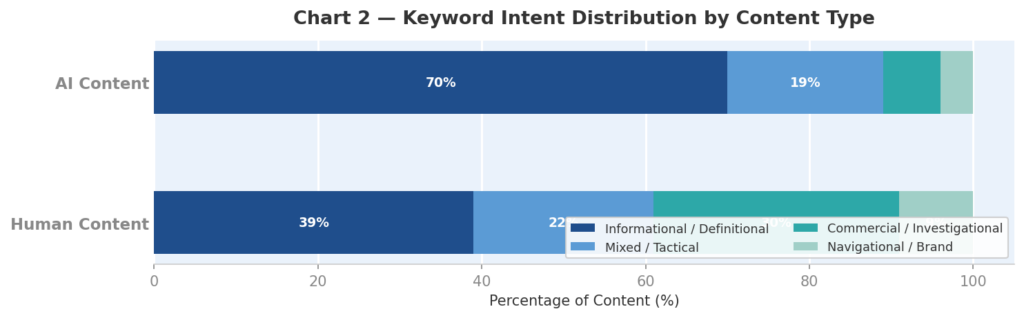

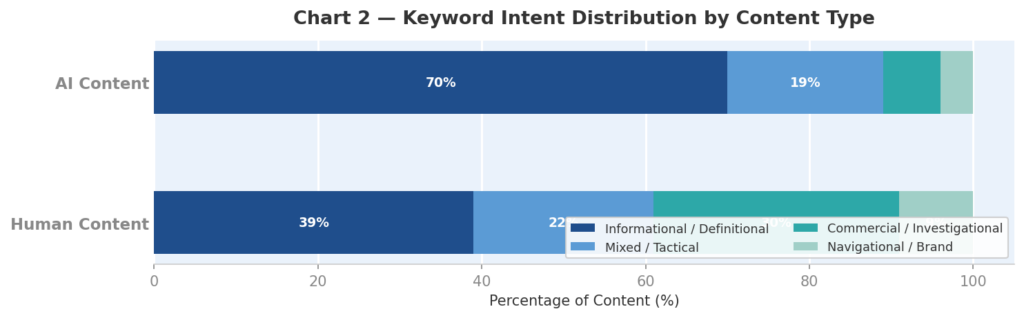

Our data makes this concrete. Chart 2 shows the keyword intent breakdown for each content type across the 12-month study period.

Chart 2: Keyword intent distribution — AI content is 70% informational vs. 39% for human content

In our study, AI blog content targeted informational and definitional queries 70% of the time: framework breakdowns, planning guides, and definitional how-tos. Human-produced content directed 30% of its effort toward commercial investigation queries: software comparisons, registration platform roundups, and product-level pages competing against established enterprise vendors.

Keyword intent accounts for approximately 70% of the performance difference in this dataset. The AI pages ranked in positions 4–6 because they answered encyclopedic questions with minimal competition. The human pages sat at positions 18–42 because they were fighting for the most commercially valuable real estate on the internet, where domain authority is the deciding factor. The human content wasn’t underperforming. It was competing at a fundamentally higher difficulty level, and any analysis that ignores this distinction is drawing conclusions from a broken premise.
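To make the idea of controlling for intent concrete, here is a minimal sketch of a stratified comparison. The rows are hypothetical placeholders chosen for illustration, not the study’s data, and the field names (`author`, `intent`, `position`) are assumptions about how a page-level export might be labeled.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical rows for illustration only; NOT the study's data.
pages = [
    {"author": "ai", "intent": "informational", "position": 5.0},
    {"author": "ai", "intent": "informational", "position": 6.0},
    {"author": "ai", "intent": "informational", "position": 4.0},
    {"author": "ai", "intent": "commercial", "position": 25.0},
    {"author": "human", "intent": "informational", "position": 5.0},
    {"author": "human", "intent": "commercial", "position": 20.0},
    {"author": "human", "intent": "commercial", "position": 25.0},
    {"author": "human", "intent": "commercial", "position": 30.0},
]


def mean_position_by(rows, *keys):
    """Average ranking position for each combination of the given keys."""
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[k] for k in keys)].append(row["position"])
    return {group: mean(values) for group, values in groups.items()}


# Naive view: authorship only. The intent mix is hidden inside each average.
naive = mean_position_by(pages, "author")

# Stratified view: authorship within each intent bucket.
stratified = mean_position_by(pages, "author", "intent")

for group, avg in sorted(stratified.items()):
    print(group, avg)
```

In this toy dataset the naive averages differ by ten positions, while the averages inside each intent bucket are identical. That is the pattern to check for before attributing a gap to authorship.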

Do AI and Human Content Perform the Same When Conditions Match?

Yes, and this is the critical finding. When you strip away the keyword difficulty variable and compare both content types at equivalent ranking positions, the CTR data overlaps substantially.

One caveat matters here: the Bohu team spent months training our AI writers to write in accordance with E-E-A-T. The study suggests that AI itself isn’t a hindrance. If you jump into ChatGPT, ask it to write an “SEO article about X topic,” and the result doesn’t perform, you can rest assured it’s not the AI itself that’s holding you back. In this dataset, human and AI content performed comparably when both followed E-E-A-T guidelines.

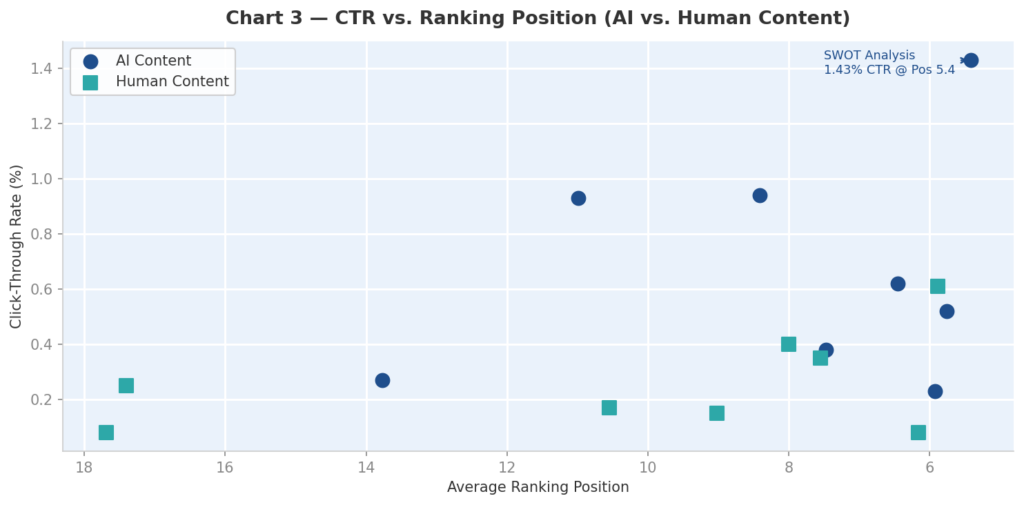

Chart 3 plots CTR against ranking position for both content types across all measured pages.

Chart 3: CTR vs. ranking position — at equivalent positions, AI and human content perform comparably

At positions 5–6, an AI blog piece on a structured framework topic achieved 1.43% CTR — a strong result driven by title-query alignment. A non-AI piece at position 5.89 on a niche product topic pulled 0.61%. Both are reasonable performers for their position band, and the variation reflects title construction and query match, not content authorship.

At positions 7–10, the picture tightens further. AI and non-AI pages produced CTRs ranging from 0.15% to 0.94% — a range explained by title format and query specificity rather than who (or what) wrote the content.

The data is consistent: when ranking position is held constant, AI content and human content earn broadly comparable CTR. Authorship isn’t the ranking signal. Quality, relevance, and keyword targeting are.
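A comparison at equivalent positions can be sketched as follows. This is an illustrative approach, not the exact method used in the study: the band boundaries, field names, and sample rows are all assumptions, and the rows are hypothetical.

```python
from collections import defaultdict


def position_band(position):
    """Map an average position to a coarse band so like is compared with like."""
    if position <= 3:
        return "1-3"
    if position <= 6:
        return "4-6"
    if position <= 10:
        return "7-10"
    return "11+"


def ctr_by_band(rows):
    """Return {(band, author): CTR in percent}, pooled over clicks and impressions."""
    totals = defaultdict(lambda: [0, 0])  # [clicks, impressions]
    for row in rows:
        key = (position_band(row["position"]), row["author"])
        totals[key][0] += row["clicks"]
        totals[key][1] += row["impressions"]
    return {
        key: round(100 * clicks / impressions, 2)
        for key, (clicks, impressions) in totals.items()
        if impressions  # skip groups with no impressions
    }


# Hypothetical rows for illustration only; NOT the study's data.
rows = [
    {"author": "ai", "position": 5.2, "clicks": 120, "impressions": 10000},
    {"author": "human", "position": 5.9, "clicks": 110, "impressions": 10000},
    {"author": "ai", "position": 8.4, "clicks": 40, "impressions": 8000},
    {"author": "human", "position": 22.1, "clicks": 9, "impressions": 9000},
]

result = ctr_by_band(rows)
```

Pooling clicks and impressions within a band, rather than averaging per-page CTRs, keeps a handful of low-impression pages from dominating the comparison.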

What Makes Content Rank Well for SaaS Companies

Understanding that AI content can rank is step one. Understanding the conditions under which it succeeds is step two, and this is where the data surfaces three specific patterns worth building around.

List and Definition Formats Drive Outsized CTR

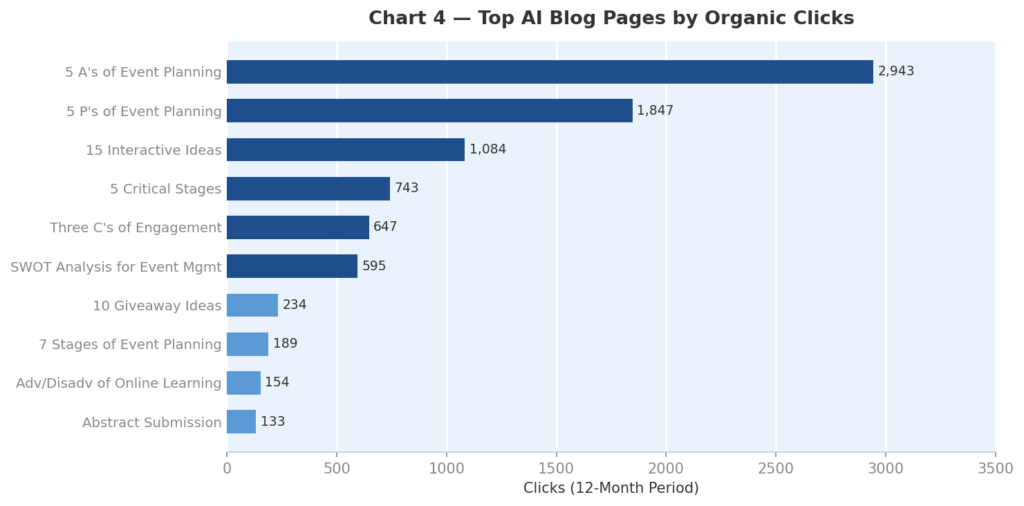

The highest-performing AI pieces in the study shared a structural trait: their titles were near-exact matches to their primary search queries, and their formats — structured breakdowns of defined frameworks or numbered idea lists — told the reader exactly what they were getting before they clicked. Chart 4 shows how dramatically click volume concentrates in these list and definition-style pieces.

Chart 4: Top AI blog pages by organic clicks — list and definition formats dominate

Three of the top four pages follow a numbered framework format (‘5 A’s,’ ‘5 P’s,’ ‘5 Critical Stages’). The SWOT Analysis piece earned the highest CTR in the dataset at 1.43%, driven by near-perfect title-to-query alignment. Numbered lists and definitional titles reduce friction in the decision to click — for SaaS companies building AI blog posts, this isn’t a design suggestion. It’s a conversion lever at the top of the funnel.
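Title-to-query alignment can be approximated with a simple token-overlap score. This is a rough heuristic sketched for illustration, not a metric Google publishes or the measure used in the study. The function names and the example titles and queries are hypothetical.

```python
import re


def tokens(text):
    """Lowercase a string and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def title_query_alignment(title, query):
    """Fraction of the query's distinct words that also appear in the title."""
    query_tokens = tokens(query)
    if not query_tokens:
        return 0.0
    return len(query_tokens & tokens(title)) / len(query_tokens)


# Hypothetical titles and queries, for illustration only.
full = title_query_alignment(
    "SWOT Analysis for Event Planning: A Practical Guide",
    "swot analysis event planning",
)
partial = title_query_alignment(
    "Event Planning Checklist",
    "swot analysis event planning",
)
```

A score near 1.0 means the title repeats nearly every word of the target query. Low scores are worth a second look before publishing, though the score ignores word order and synonyms.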

Semantic Coverage Compounds Over Time

The top-performing AI piece in the study appeared in results for over 400 distinct queries over the 12-month period. This is the compounding advantage of well-structured informational content: a single, thoroughly written piece captures long-tail organic traffic across hundreds of related query variations without additional investment.

For SaaS content teams under resource pressure, this is the core efficiency argument for AI content scalability. One well-researched, properly structured piece — built on solid keyword research and edited with human oversight — can perform the work of many.
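Query coverage per page is straightforward to measure from a Search Console performance export that includes both the page and query dimensions. The sketch below assumes rows shaped as dictionaries with `page`, `query`, and `impressions` keys. Those key names and the sample rows are assumptions for illustration.

```python
from collections import defaultdict


def distinct_queries_per_page(rows):
    """Count distinct queries for which each page earned at least one impression."""
    queries = defaultdict(set)
    for row in rows:
        if row["impressions"] > 0:
            # Normalize case and whitespace so near-identical strings count once.
            queries[row["page"]].add(row["query"].strip().lower())
    return {page: len(found) for page, found in queries.items()}


# Hypothetical rows for illustration only; NOT the study's data.
rows = [
    {"page": "/blog/swot-analysis", "query": "swot analysis", "impressions": 900},
    {"page": "/blog/swot-analysis", "query": "SWOT analysis ", "impressions": 40},
    {"page": "/blog/swot-analysis", "query": "swot analysis example", "impressions": 120},
    {"page": "/blog/planning-guide", "query": "event planning guide", "impressions": 300},
    {"page": "/blog/planning-guide", "query": "planning template", "impressions": 0},
]

coverage = distinct_queries_per_page(rows)
```

Tracking this count over time shows whether a piece is still widening its long-tail footprint or has plateaued.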

Informational Content Builds the Foundation Commercial Content Needs

Here’s where the data reveals a strategic gap that many SaaS companies share: the two content types were operating in silos. High-traffic informational pages ranking positions 2–6 were not passing internal link equity to the commercial pages stalled at positions 18–42.

A commercial software comparison page sitting at position 23 needs both external authority and internal links from pages that Google already trusts. When the informational content and the commercial content aren’t connected in an internal linking strategy, you’re leaving compounding value on the table. This is the actual opportunity the data surfaces — not a question of AI versus human, but of how the two content types work together as a system.
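One way to find the missing connections is to pair strong informational pages with stalled commercial pages on the same topic. The sketch below is illustrative: the thresholds borrow the position ranges mentioned above, while the field names, topic labels, and URLs are hypothetical.

```python
def suggest_internal_links(pages, strong_max=6.0, stalled_min=18.0):
    """Pair strong informational pages with stalled commercial pages on the same topic.

    A pair is suggested only if the informational page does not already
    link to the commercial page.
    """
    sources = [
        p for p in pages
        if p["intent"] == "informational" and p["position"] <= strong_max
    ]
    targets = [
        p for p in pages
        if p["intent"] == "commercial" and p["position"] >= stalled_min
    ]
    suggestions = []
    for src in sources:
        for tgt in targets:
            if src["topic"] == tgt["topic"] and tgt["url"] not in src["links_to"]:
                suggestions.append((src["url"], tgt["url"]))
    return suggestions


# Hypothetical site inventory, for illustration only.
pages = [
    {"url": "/blog/what-is-event-management", "intent": "informational",
     "position": 4.2, "topic": "event-management", "links_to": set()},
    {"url": "/blog/event-budget-guide", "intent": "informational",
     "position": 5.1, "topic": "budgeting",
     "links_to": {"/compare/budgeting-tools"}},
    {"url": "/compare/event-management-software", "intent": "commercial",
     "position": 23.0, "topic": "event-management", "links_to": set()},
    {"url": "/compare/budgeting-tools", "intent": "commercial",
     "position": 31.0, "topic": "budgeting", "links_to": set()},
]

suggestions = suggest_internal_links(pages)
```

The output is a worklist for an editor, not a set of links to add automatically. Each suggested link still needs a natural, contextual placement in the source article.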

What E-E-A-T Means for AI Content in SaaS

E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) is frequently cited as the reason AI content can’t compete. The argument is that AI lacks lived experience and therefore can’t satisfy Google’s quality standards. This is partially right and substantially overstated.

Google confirms that content must be original, useful, and written for people, not just algorithms, and that E-E-A-T remains essential, especially experience-led content that shows firsthand knowledge. AI-generated content is allowed, but human oversight and authenticity are required. That emphasis on human oversight is the operative phrase.

For SaaS companies, E-E-A-T signals come from structure and source: clear authorship, domain expertise reflected in the writing, accurate claims, and internal consistency with what the brand actually does. A well-prompted AI draft, written by an agent trained for clarity and conciseness and then reviewed and refined by a subject matter expert, can satisfy these requirements. Raw, unedited AI output (generic, hedged, and factually uncertain) typically cannot. It’s also worth mentioning that raw copy and paste from a chatbot is typically where we see companies run into spam and indexing issues. Be especially wary of brand chatbots. We consistently see agents that are trained on brand voice and tone, but not on SEO writing, ruin content performance.

The distinction isn’t AI versus human. It’s accountable content versus anonymous content. Assign authorship. Fact-check claims. Write with clarity, and offer a unique perspective. Those are the practical levers for AI content authenticity, regardless of which tool drafted the first version.

The Risks Worth Taking Seriously

Acknowledging that AI content can rank doesn’t mean the risks are overstated. They aren’t.

AI content hallucination is the biggest issue for SaaS companies specifically. AI tools confidently generate inaccurate product comparisons, incorrect pricing, and fabricated feature claims when an outline asks for them and the underlying information hasn’t been provided. Without tight audience targeting, AI is also prone to addressing different audiences in the same post, which is an immediate non-starter for Google.

Scaled content abuse is the second risk worth naming. Google’s March 2024 update aimed to reduce unoriginal and unhelpful content in search results by 40%, specifically targeting content that feels mass-produced, repetitive, or written to rank instead of to help. Publishing high volumes of thin, templated AI content, even if technically unique, will eventually attract algorithmic scrutiny. Volume is not a strategy. Volume of quality content is.

Duplicate content risk with AI is real but manageable. AI tools trained on similar datasets tend to produce similar sentences, which can trigger over-similarity issues when published at scale. The solution is differentiation at the input level: use proprietary data, original research, or brand-specific context to give the model something to work with that generic outputs can’t replicate.
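A simple pre-publish check for over-similar drafts is Jaccard similarity over word shingles. Google does not publish how it measures similarity, so treat this as an internal sanity check under that assumption, not a model of what search engines do. The shingle size and the example sentences are illustrative choices.

```python
def shingles(text, size=3):
    """Return the set of overlapping word n-grams (shingles) in a text."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(a, b, size=3):
    """Shared shingles divided by total distinct shingles across both texts."""
    set_a, set_b = shingles(a, size), shingles(b, size)
    if not set_a or not set_b:
        return 0.0  # texts shorter than one shingle cannot be compared
    return len(set_a & set_b) / len(set_a | set_b)


# Hypothetical sentences, for illustration only.
draft_one = "event planning starts with a clear budget"
draft_two = "event planning starts with a clear timeline"
score = jaccard_similarity(draft_one, draft_two)
```

Here the two drafts share four of six distinct shingles, so the score is about 0.67. Running this across a batch of drafts flags the pairs an editor should differentiate before publishing.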

How to Build an AI Content Workflow That Actually Performs

The gap between AI content ranking and AI content failing is almost always found in the inputs and the process, not in the technology itself. Here’s what the data suggests about building a content program that compounds:

Start with keyword research that reflects intent. The AI pieces that drove traffic targeted queries with clear, high-volume intent and realistic ranking potential. That wasn’t an accident. It was a deliberate targeting decision by Bohu and part of a broader topical strategy aimed at establishing authority. Search intent alignment at the research stage determines whether your content has a realistic path to page one before a single word is written.

Use proprietary context to differentiate the output. Generic prompts produce generic content. SaaS companies have access to product data, customer use cases, industry benchmarks, and subject matter experts that no AI tool has by default. Feeding that context into your AI content workflow, through structured prompts, brand guidelines, or retrieval-augmented approaches, is what separates content that reads like a thought leader from content that reads like no one wrote it. Most of the websites we work with already have great content on them. We use RAG to supply that context to our writer, or we feed it interview notes or transcripts.

Build the internal linking bridge between informational and commercial. Informational posts build authority for your business. Map your top-traffic informational pieces to the commercial pages through contextual links so they support and build a topical link network. This is one of the highest-leverage actions most SaaS content teams aren’t taking.

Edit for readability, not just accuracy. AI writing is often dry, repetitive, and lacking in nuance. A subject matter expert should refine it, adding personality, original perspective, and genuine opinion. Readability isn’t a soft metric. Content that holds attention signals engagement to Google’s systems, influencing how content is evaluated over time.
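The proprietary-context step above can be sketched as a tiny retrieval step in front of the prompt. This is a deliberately naive illustration that ranks snippets by word overlap with the brief. It is not Bohu’s pipeline, and production retrieval-augmented setups typically use embeddings instead. The function names, prompt wording, and snippets are all hypothetical.

```python
import re


def _tokens(text):
    """Lowercase a string and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(snippets, brief, k=2):
    """Return the k snippets sharing the most words with the brief."""
    brief_tokens = _tokens(brief)
    ranked = sorted(
        snippets,
        key=lambda snippet: len(_tokens(snippet) & brief_tokens),
        reverse=True,  # sorted() is stable, so ties keep their original order
    )
    return ranked[:k]


def build_prompt(brief, snippets, k=2):
    """Assemble a prompt that grounds the draft in retrieved company context."""
    context = "\n".join(f"- {s}" for s in retrieve(snippets, brief, k))
    return (
        "Use only the facts in CONTEXT for product claims.\n\n"
        f"CONTEXT:\n{context}\n\n"
        f"BRIEF:\n{brief}"
    )


# Hypothetical company snippets, for illustration only.
snippets = [
    "Our registration module supports tiered ticket pricing.",
    "The company was founded in a garage.",
    "Customers use the registration module for conference check-in.",
]
brief = "Write a guide to conference registration pricing"
prompt = build_prompt(brief, snippets)
```

Restricting product claims to supplied context also addresses the hallucination risk described earlier, since the model is not asked to invent pricing or features it was never given.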

AI Visibility and Why It Matters for SaaS Companies

No discussion of AI content for SEO in 2026 is complete without addressing AI Overviews and Generative Engine Optimization (GEO, or AEO, take your pick). AI search is reshaping how informational queries resolve, and SaaS companies that optimize only for traditional blue-link rankings are optimizing for a shrinking surface.

Research shows AI Overviews appear in up to 23% of technology-related queries, while commercial searches remain well below 6%. For SaaS content teams, this split is instructive. Your informational content needs to be structured for citation in Google’s AI Overviews and on AI platforms generally: concise answers, clear headings, factual precision. The good news is that everything that makes your content great for SEO also works pretty well for GEO. Your commercial content still competes primarily in traditional SERP positions, where ranking factors haven’t fundamentally changed.

This client, in particular, is a massive success story for AI visibility. We radically increased both citations and brand mentions across AI platforms. That’s not what this article is about, but it’s worth mentioning because getting these concepts right works in both places.

Ready to Build a Content Program That Performs?

The data makes the case clearly: AI content works when the strategy is right. Product strategy, keyword targeting, content quality, editorial oversight, and internal architecture matter more than whether a human or a machine produced the first draft.

If you’re a SaaS company running content at any scale, Bohu Digital builds AI-assisted content programs grounded in proprietary performance data and structured around the factors that actually drive rankings. Our AI-assisted content performs without breaking the bank. Reach out for a consultation and let’s look at what your current content program is, and isn’t, doing.